Human Oversight in AI

Human oversight vs. competent human oversight

Understanding the critical distinction between having a human present and having the right human in the right role, with the right knowledge to govern artificial intelligence systems.

Executive Summary

As organizations increasingly deploy artificial intelligence across critical decision-making processes, the question of human oversight has moved from a philosophical debate to a regulatory imperative. Frameworks such as the EU AI Act, ISO 42001, and NIST AI RMF all call for meaningful human control, yet none of them defines what 'meaningful' looks like in practice.

This whitepaper examines a distinction that is too often overlooked: the difference between human oversight - where a person is simply present in the loop - and competent human oversight, where a qualified, trained, and contextually aware individual is actively capable of understanding, challenging, and, if necessary, overriding AI system outputs.

The consequences of conflating the two are significant. An organization that relies on nominal human presence without ensuring genuine competence risks regulatory exposure, poor governance outcomes, and, in high-stakes domains, real harm to individuals and institutions.

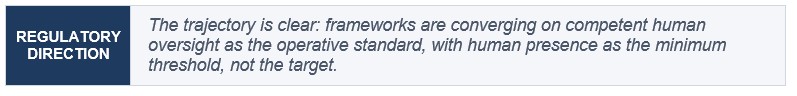

Human oversight is the floor. Competent human oversight is the standard that governance frameworks are increasingly moving toward, and the one organizations should design for.

1. Defining Human Oversight

Human oversight refers to the involvement of a person in monitoring, supervising, or intervening in decisions made or supported by an AI system. In its most basic form, it means that a human is 'in the loop': the system does not operate in a fully autonomous, unchecked manner.

What it focuses on

• Ensuring that AI decisions can be reviewed or overridden before they take effect

• Providing a clear line of accountability for AI-assisted outcomes

• Preventing fully autonomous AI behavior in high-risk or sensitive contexts

Examples in practice

• An IT administrator reviewing AI-generated access control recommendations before privilege changes are applied to user accounts

• A security analyst checking alerts triaged and prioritized by an AI-driven SIEM before escalating or closing incidents

• A systems engineer confirming an AI-assisted change management recommendation before it is approved and deployed to production infrastructure

Strengths

• Adds a layer of ethical and legal accountability to automated decisions

• Reduces the risk of fully autonomous harm reaching end users

• Relatively straightforward to implement and widely adopted as the baseline requirement across most AI governance frameworks

Limitations

• The human present may not understand the underlying AI system, its training data, or its failure modes

• Without meaningful engagement, oversight quickly becomes a rubber-stamping exercise, providing the appearance of control without substance

• Introduces process friction without necessarily improving decision quality

2. Defining Competent Human Oversight

Competent human oversight goes further. It requires that the human involved in reviewing or governing an AI system is qualified, trained, and genuinely capable of understanding and critically engaging with the system's outputs. It is not enough to have a person present. That person must be able to interpret what the AI is doing, why, and what its limitations are.

What it focuses on

• Skill, expertise, and context awareness appropriate to the domain and the system

• The ability to interpret AI outputs, confidence scores, uncertainty signals, and known limitations

• Making informed, critical decisions, not merely approving outputs because no obvious error is visible

Examples in practice

• A senior IT security engineer reviewing AI-generated access control recommendations who looks beyond the output itself to the model's logic, its known edge cases around privileged accounts, and whether the recommendation aligns with the organization’s least privilege policy

• A threat intelligence analyst who understands model confidence thresholds, known false-positive patterns, and alert fatigue risk when evaluating AI-prioritized SIEM incidents, able to identify when the model is likely to have misfired and why

• A change advisory board member with sufficient technical and compliance knowledge to assess whether an AI-assisted change recommendation meets release standards, architectural governance requirements, and applicable regulatory obligations, and to articulate the basis for their decision if challenged

Strengths

• Produces higher-quality decisions through informed critical thinking rather than passive review

• Enables detection of bias, model drift, and edge cases that nominal oversight would miss

• Provides genuine governance and control, the kind that regulators increasingly expect

Limitations

• Requires investment in training, tooling, and access to domain expertise

• Harder to scale across large organizations or high-volume automated processes

• Depends on the availability of appropriately skilled personnel, a resource that may be scarce in specialist domains

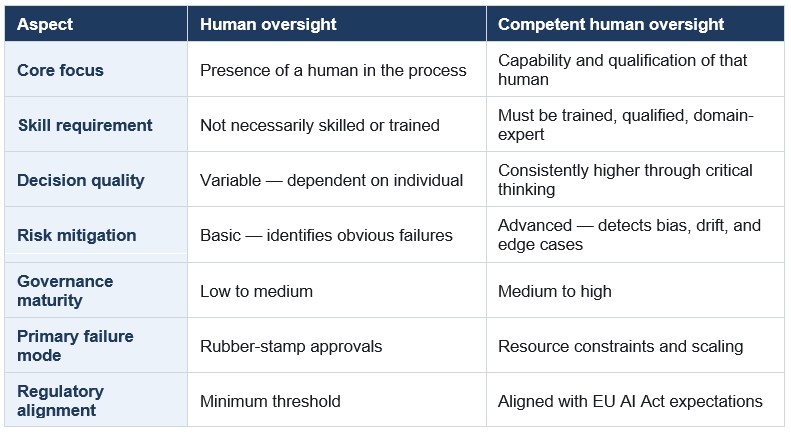

3. Comparative Analysis

The table below summarizes the key distinctions between the two models across governance dimensions relevant to organizations deploying AI systems in regulated or high-risk environments.

4. The Analogy That Clarifies the Difference

The distinction between human oversight and competent human oversight can be illustrated simply through aviation.

Human Oversight: A person is in the cockpit watching the autopilot. They are physically present and technically 'in the loop', but they may not understand what the autopilot is doing, why it has made the decisions it has, or how to intervene effectively if something goes wrong.

Competent Human Oversight: A trained, licensed pilot is monitoring the autopilot. They understand the system's logic, its known failure modes, and the conditions under which it should be overridden. They are not just present; they are ready and capable of taking meaningful control at any moment.

The difference is not academic. In domains where AI systems support decisions about credit, healthcare, hiring, law enforcement, or national security, the distinction between nominal and competent human oversight determines whether governance is real or performative.

5. Regulatory Expectations and the Direction of Travel

Regulatory frameworks are increasingly moving beyond a simple requirement for human presence. The EU AI Act, in particular, places substantive obligations on providers and deployers of high-risk AI systems that go well beyond the requirement to 'have a human check the output'.

What regulators expect

• Individuals overseeing AI systems must understand their capabilities and limitations, not just in general terms, but in the specific operational context in which the system is deployed

• Organizations must define clear roles, training programs, and accountability structures for AI oversight functions

• Oversight personnel must be able to intervene effectively, meaning they possess both the authority and the technical competence to act when a system behaves unexpectedly or inappropriately

ISO 42001 (the AI Management System standard) requires organizations to demonstrate that oversight mechanisms are genuinely effective, not merely documented. The standard's emphasis on competence, awareness, and continual improvement makes implicit demands for competent human oversight across the AI lifecycle.

6. Practical Implications for Organizations

For organizations seeking to move from basic human oversight to genuine competent human oversight, the following actions are foundational:

6.1 Map oversight roles to competency requirements

For each AI system in deployment, define what competencies are required of the individuals tasked with oversight. This should include domain expertise, familiarity with the system's architecture and training data, and understanding of its known limitations and failure modes.
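One way to make this mapping concrete is to record the required competencies per system and compare them with what each reviewer actually holds. The following is a minimal sketch under stated assumptions: the class names, the system identifier, and the competency labels are all hypothetical and would need to be replaced with an organization's own competency framework.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class OversightRole:
    """Competencies required to oversee one AI system (illustrative names only)."""
    system: str
    required: frozenset[str]


@dataclass
class Reviewer:
    """A person assigned to oversight, with the competencies they have evidenced."""
    name: str
    competencies: set[str] = field(default_factory=set)


def competency_gaps(role: OversightRole, reviewer: Reviewer) -> set[str]:
    """Return the required competencies the reviewer does not yet hold."""
    return set(role.required) - reviewer.competencies


# Hypothetical role for an AI-driven SIEM triage system.
siem_role = OversightRole(
    system="ai-siem-triage",
    required=frozenset(
        {"domain_expertise", "system_architecture", "training_data", "failure_modes"}
    ),
)
analyst = Reviewer("analyst-01", {"domain_expertise", "failure_modes"})

gaps = competency_gaps(siem_role, analyst)
print(sorted(gaps))  # ['system_architecture', 'training_data']
```

An empty result would indicate that the reviewer covers every competency defined for that system; a non-empty result identifies where training or reassignment is needed before the person can be relied on for oversight.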

6.2 Invest in structured training

Oversight personnel should receive training that goes beyond basic AI literacy. Training should be specific to the system, the domain, and the risk profile, and it should be refreshed when the system is updated or when new failure modes are identified.

6.3 Redesign oversight workflows

Many current oversight processes are designed for speed and throughput rather than genuine scrutiny. Where AI outputs carry significant consequences, workflows should be redesigned to give oversight personnel the time, information, and authority to engage critically, not just sign off.

6.4 Document competence, not just presence

Audit trails and governance records should capture not only that a human reviewed an AI output, but who reviewed it, what their relevant competencies are, and what considerations informed their decision. This provides the evidential basis for regulatory engagement and internal assurance.
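A record of this kind can be sketched as a small data structure that refuses to store a review unless the reviewer's competencies and reasoning are captured alongside the decision. This is an illustrative sketch only: the field names, the decision vocabulary, and the example identifiers are assumptions, not a prescribed schema.

```python
from dataclasses import asdict, dataclass
from datetime import datetime, timezone

# Assumed decision vocabulary; adapt to the organization's own process.
ALLOWED_DECISIONS = {"approved", "overridden", "escalated"}


@dataclass(frozen=True)
class OversightRecord:
    """One audit entry: who reviewed, with what competence, and why they decided."""
    output_id: str
    reviewer: str
    reviewer_competencies: tuple[str, ...]
    decision: str
    considerations: str
    reviewed_at: str


def record_review(output_id, reviewer, reviewer_competencies, decision, considerations):
    """Build an audit entry, rejecting reviews that only evidence presence."""
    if decision not in ALLOWED_DECISIONS:
        raise ValueError(f"unknown decision: {decision!r}")
    if not reviewer_competencies:
        raise ValueError("record at least one relevant competency for the reviewer")
    if not considerations.strip():
        raise ValueError("record the considerations behind the decision")
    return OversightRecord(
        output_id=output_id,
        reviewer=reviewer,
        reviewer_competencies=tuple(reviewer_competencies),
        decision=decision,
        considerations=considerations.strip(),
        reviewed_at=datetime.now(timezone.utc).isoformat(),
    )


# Hypothetical review of an AI-generated access control recommendation.
entry = record_review(
    output_id="access-rec-0001",
    reviewer="security-engineer-01",
    reviewer_competencies=["least_privilege_policy", "model_failure_modes"],
    decision="overridden",
    considerations="Recommendation grants standing admin rights to a service account.",
)
print(asdict(entry))
```

The validation step is the design choice that matters here: a record with a name and a timestamp but no stated considerations shows only that someone was present, which is the gap this section describes.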

6.5 Build escalation paths

Where oversight personnel identify concerns about specific outputs, systemic patterns, or model behavior, there must be clear escalation paths to technical, legal, or executive functions. Competent human oversight without escalation authority is incomplete.
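Escalation paths of this kind can be written down as an explicit routing table so that gaps are visible before they matter. The sketch below is a hedged illustration: the concern types and functions come from the paragraph above, but which concern is routed to which function is purely an assumption made for the example.

```python
from enum import Enum


class Concern(Enum):
    SPECIFIC_OUTPUT = "specific_output"
    SYSTEMIC_PATTERN = "systemic_pattern"
    MODEL_BEHAVIOR = "model_behavior"


class Function(Enum):
    TECHNICAL = "technical"
    LEGAL = "legal"
    EXECUTIVE = "executive"


# Assumed routing for illustration; each organization defines its own.
ESCALATION_PATHS: dict[Concern, list[Function]] = {
    Concern.SPECIFIC_OUTPUT: [Function.TECHNICAL],
    Concern.SYSTEMIC_PATTERN: [Function.TECHNICAL, Function.LEGAL],
    Concern.MODEL_BEHAVIOR: [Function.TECHNICAL, Function.EXECUTIVE],
}


def escalation_path(concern: Concern) -> list[Function]:
    """Return the functions to notify, failing loudly if no path is defined."""
    path = ESCALATION_PATHS.get(concern)
    if not path:
        raise LookupError(f"no escalation path defined for {concern.value}")
    return path


def unrouted_concerns() -> list[Concern]:
    """List concern types that currently have nowhere to go."""
    return [c for c in Concern if not ESCALATION_PATHS.get(c)]


print([f.value for f in escalation_path(Concern.SYSTEMIC_PATTERN)])  # ['technical', 'legal']
print(unrouted_concerns())  # []
```

Running a check such as `unrouted_concerns()` as part of governance review is one way to confirm that every category of concern has a defined destination.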

Conclusion

The difference between human oversight and competent human oversight is the difference between compliance in form and compliance in substance. Organizations that treat the presence of a human in the loop as sufficient have met a minimum threshold, but they have not addressed the governance challenge that AI presents.

As AI systems become more capable, more opaque, and more consequential, the demands on the humans who oversee them will only increase. Investing now in the training, structures, and workflows that enable competent human oversight is not merely a regulatory response; it is the foundation of trustworthy AI governance.

Human Oversight = "Someone is there."

Competent Human Oversight = "The right person is there, and they know what they are doing."
