Gaps in ISO AI Standards

Bridging the Competence Gap in ISO AI Standards

Why the Standards-to-Competence Pipeline is Structurally Weak and How to Fix It

SUMMARY

The International Organization for Standardization has moved quickly to introduce AI governance standards, most notably ISO/IEC 42001, in response to the rapid proliferation of artificial intelligence across industries and sectors. These standards represent an important step forward, providing organizations with a framework for governing AI systems responsibly and demonstrating that commitment to external stakeholders.

However, a critical and largely unaddressed problem persists. The pipeline that connects a published standard to real-world implementation capability is structurally weak. At every stage from interpretation through training, consulting, implementation, audit, and certification, clarity degrades and variability increases. The result is compliance that looks sound on paper but fails to deliver genuine governance maturity.

Compounding this structural weakness is a more fundamental challenge: AI is an extraordinarily complex domain that demands competence across a wide and intersecting spectrum of disciplines. It is not a subject that can be adequately addressed through a four- or five-day training course, whether delivered in person or online. The depth of knowledge required to govern AI systems responsibly, spanning machine learning, data science, ethics, cybersecurity, law, and organisational risk management, far exceeds what any introductory programme can provide. Yet the training market has not kept pace with this reality, and organizations are being certified on the basis of competence that, in many cases, has not been genuinely developed.

This paper examines the root causes of this gap, explains why AI amplifies it, and sets out a practical model for closing it through structured, risk-driven interpretation and implementation.

THE STRUCTURAL PROBLEM

The Pipeline and Where It Breaks

A governance certification follows a broadly consistent path: a standard is published, practitioners interpret it, training programs emerge, consultants advise organizations, implementations are built, auditors assess them, and certification is awarded. At each stage, the original intent of the standard is subject to interpretation, assumption, and variability.

The problem is not that this pipeline exists, but that each stage introduces its own failure point:

— Standards are deliberately high-level and non-prescriptive, trading specificity for universal applicability.

— Training programs lag behind publication, often by years, and vary significantly in depth and rigor.

— Consulting engagements default to template-driven approaches that prioritize documentation over genuine capability.

— Implementations become misaligned with actual risk because the connection between standard requirements and business context is never properly established.

— Audits become subjective exercises where findings depend as much on the auditor's background as on the organization’s controls.

— Certification signals that a standard has been applied, not that the organisation is genuinely capable of governing its AI systems.

Certification becomes a proxy for capability without guaranteeing it.

WHY ISO STANDARDS CREATE THIS CHALLENGE

Designed for Universality

Standards such as ISO/IEC 42001 and ISO/IEC 27001:2022 are designed to be industry-neutral, globally applicable, and outcome-focused. This universality is their greatest strength and the source of their most significant limitation in practice. Because they must apply equally to a multinational bank, a public transport operator, and a healthcare provider, they define what is required without prescribing how it should be achieved. That translation, from requirement to control to implementation, is left entirely to the organization, its consultants, and its auditors.

The Market Readiness Assumption

This approach works when the market is ready to support it: when skilled practitioners exist in sufficient numbers, when interpretation is broadly consistent, and when mature implementation ecosystems have developed around the standard. For well-established frameworks such as ISO 9001 or ISO/IEC 27001, these conditions have largely been met over decades of application.

For AI governance standards, they have not. AI expertise is scarce and unevenly distributed. The practitioner community is small, and its interpretation of requirements varies widely. The consulting ecosystem is underdeveloped, relying heavily on templates borrowed from adjacent domains rather than AI-specific guidance. ISO/IEC 42001, published in 2023, is being implemented in a market that has not yet developed the capability to implement it well.

WHY AI AMPLIFIES THE PROBLEM

A Domain That Demands Genuine Depth

AI is not a subject that yields to surface-level familiarity. It is one of the most technically and ethically complex domains that governance practitioners have ever been asked to manage, and it demands genuine, sustained competence across an unusually wide spectrum of disciplines. Understanding how a machine learning model produces its outputs, why it may behave unexpectedly, where its training data introduces bias, and how its decisions can be explained to those affected by them requires knowledge that takes years to develop, not days.

This reality stands in sharp contrast to how the training market has responded to demand for AI governance expertise. The dominant model is a four- or five-day course, delivered in person or online, that covers the clause structure of ISO/IEC 42001 and its key concepts. That format is structurally incapable of producing the depth of competence that effective AI governance requires. A practitioner who completes such a course will understand the shape of the standard. They will not understand the substance of the domain it governs.

Online delivery compounds the problem further, removing the contextual discussion, practical application, and expert challenge that help practitioners develop genuine judgement rather than surface familiarity. Attendance at a course is not the same as competence. In AI governance, the gap between the two is particularly consequential.

Multidisciplinary Complexity

Effective AI governance does not sit within a single discipline. It spans machine learning and data science, data governance, cybersecurity and information security, ethics and human rights, and legal and regulatory compliance. A practitioner who is an expert in information security risk management may have a limited understanding of model drift or algorithmic bias. A data scientist may be unfamiliar with control frameworks or audit evidence requirements. Few individuals, and fewer organizations, currently possess meaningful depth across all of these domains simultaneously.

This multidisciplinary requirement creates a genuine talent problem. Organizations cannot simply send their ISMS Manager on an ISO/IEC 42001 foundation course and consider their AI governance competence addressed. Governing AI responsibly requires a team with complementary expertise, supported by ongoing learning, practical experience, and access to specialist knowledge across technical, ethical, and regulatory dimensions. Building that capability takes time, investment, and a fundamentally different approach to professional development than the current market is offering.

Abstract and Evolving Requirements

ISO/IEC 42001 introduces requirements around concepts such as fairness, explainability, and transparency that are inherently difficult to operationalize. Unlike the requirement to encrypt data at rest, which has a clear technical implementation, a requirement to ensure that an AI system produces fair outcomes is context-dependent, contested in its definition, and still evolving in academic and regulatory literature. Turning these requirements into auditable controls demands a level of domain expertise and interpretive maturity that the market has not yet developed, and that a multi-day training course cannot credibly claim to provide.

The Speed-Capability Gap

Standards arrive before the capability to implement them exists. ISO/IEC 42001 was published in late 2023. Training programs qualified to prepare practitioners for it remain limited. Tooling ecosystems built around its specific requirements are only beginning to emerge. The market is responding to demand for AI governance expertise by supplying volume rather than depth, and the consequences of that trade-off are visible in the quality of implementations being produced.

BUSINESS IMPACT

What Organizations Experience

In practice, the structural weaknesses described above produce a recognizable pattern. Compliance programs become template-driven, with documentation produced to satisfy clause requirements rather than to reflect genuine operational controls. Control implementation is shallow, covering the most visible requirements while leaving harder-to-audit areas underdeveloped. Audit results become inconsistent, with findings varying significantly depending on which auditor or certification body conducts the assessment.

The Strategic Risk of False Assurance

The most significant business risk arising from this pattern is false assurance. An organisation that has achieved ISO/IEC 42001 certification through a superficial compliance programme may genuinely believe that its AI risks are managed, its governance is sound, and its regulatory exposure is contained. In reality, AI risks may remain unidentified or untreated, decisions may lack meaningful human oversight, and the controls that exist on paper may not be functioning as intended.

As regulators in jurisdictions including the EU begin to rely on AI standards certifications as evidence of governance maturity, the consequences of false assurance become both operational and legal. Certification that does not reflect genuine capability exposes organizations to regulatory action, reputational damage, and harm to individuals whose rights are affected by AI systems operating without adequate controls.

CLOSING THE GAP: A PRACTICAL MODEL

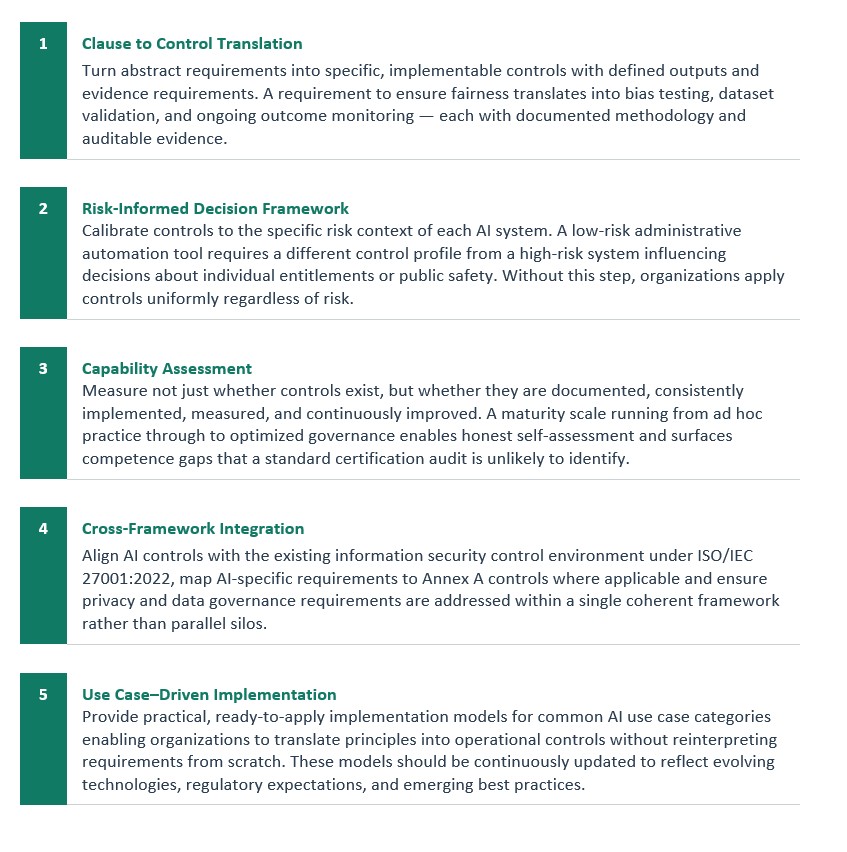

Addressing the structural weakness in the standards-to-competence pipeline requires more than better training or more detailed auditing. It requires a structured approach to interpreting standards, contextualizing requirements, measuring capability, and integrating AI governance with the broader control environment.

It also requires an honest acknowledgement that competence in AI governance is built over time through sustained investment, not awarded at the end of a training week.

ENABLING CAPABILITY WITH MYRA & COMPLIANCE MAPPER

The five-layer model described above is operationalized within the Myra Risk Engine and Compliance Mapper platform, which together provide the structured interpretation, traceability, and measurement capabilities needed to close the competence gap in practice.

— Structured mapping from standard requirements to specific controls, reducing interpretive variability and ensuring clause-to-control translation is consistent and documented.

— Full traceability from requirement through risk to control to evidence, satisfying auditor expectations and enabling organizations to reconstruct governance decisions at any point.

— An integrated risk model that aligns AI governance with cybersecurity and information security controls under ISO/IEC 42001 and ISO/IEC 27001:2022 within a unified framework.

— Capability assessment tools that evaluate actual organizational performance, rather than simple pass/fail compliance, enabling more transparent self-assessment and driving targeted, meaningful improvement initiatives.

— Implementation reference models and scenario-based guidance through Compliance Mapper, with mappings maintained as standards, regulations, and technology evolve.

These tools do not replace the need for genuine competence, but they provide the structure and support that allow competence to be applied consistently and evidenced effectively.
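
To make the idea of traceability from requirement through risk to control to evidence more concrete, the chain can be sketched as a small data structure with a check for broken links. The following Python sketch is illustrative only. It is not the Myra Risk Engine or Compliance Mapper data model, and every name in it (`Requirement`, `Control`, `traceability_gaps`, and identifiers such as `REQ-1` and `CTL-1`) is a hypothetical placeholder rather than a real clause or control reference.

```python
from dataclasses import dataclass, field


@dataclass
class Control:
    control_id: str  # hypothetical identifier
    description: str
    # References to records that show the control is operating (hypothetical file names).
    evidence: list[str] = field(default_factory=list)


@dataclass
class Requirement:
    req_id: str   # hypothetical identifier, not a real clause number
    summary: str
    risk: str     # the risk this requirement is interpreted as addressing
    controls: list[Control] = field(default_factory=list)


def traceability_gaps(requirements: list[Requirement]) -> list[str]:
    """Return a description of every broken link in the requirement-to-evidence chain."""
    gaps = []
    for req in requirements:
        if not req.controls:
            gaps.append(f"{req.req_id}: no control mapped")
        for control in req.controls:
            if not control.evidence:
                gaps.append(f"{req.req_id} -> {control.control_id}: no evidence")
    return gaps


# Illustrative data only; the requirements and controls are invented for this sketch.
requirements = [
    Requirement(
        req_id="REQ-1",
        summary="Assess the impact of the AI system on affected individuals",
        risk="Unfair outcomes for a group of applicants",
        controls=[
            Control("CTL-1", "Periodic bias review", evidence=["bias-review-q1.pdf"]),
            Control("CTL-2", "Human review of declined applications"),
        ],
    ),
    Requirement(
        req_id="REQ-2",
        summary="Monitor model performance in operation",
        risk="Undetected model drift",
    ),
]

for gap in traceability_gaps(requirements):
    print(gap)
```

Run against the sample data, the check reports that `CTL-2` has no evidence and that `REQ-2` has no control mapped. A documentation-only programme would typically stop at listing the requirements; the point of the sketch is that the gaps only become visible once each requirement is linked to a risk, a control, and evidence that the control is operating.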

RECOMMENDATIONS

For Organizations

— Reorient certification programs around genuine risk management rather than documentation compliance.

— Build multidisciplinary capability combining AI domain expertise with information security, ethics, legal, and audit skills. This is a prerequisite, not a nice-to-have.

— Invest in sustained professional development across the full spectrum of AI governance disciplines, rather than relying on short-format training to produce the depth the domain requires.

— Adopt a capability-based approach as a more accurate and meaningful indicator of progress than certification status alone.

For Consultants and Auditors

— Move beyond template-driven approaches that prioritize documentation over substance.

— Develop genuine AI domain expertise, not just familiarity with the standard's clause structure.

— Invest in technical knowledge of AI systems and ongoing engagement with developments in machine learning, data governance, and AI ethics.

— Apply structured interpretation frameworks consistently to reduce variability and improve the reliability of both implementation guidance and audit findings.

For Training Providers

— Be transparent about what a four- or five-day course does and does not develop in practitioners.

— Design learning pathways that extend well beyond foundation courses and reflect the sustained investment competence requires.

— Resist commercial pressure to position short-format programs as sufficient preparation for a domain of this complexity.

— Recognize that competence in AI governance is built through sustained learning, practical application, mentoring, and experience, not through attendance at a training event.

For Standards Bodies

— Supplement high-level standards with implementation guidance, reference control models, and competency frameworks that support consistent interpretation.

— Engage with the training and professional development ecosystem to help establish credible competency benchmarks for AI governance practitioners.

— Ensure those benchmarks reflect the genuine depth and breadth of knowledge the domain demands.

CONCLUSION

The challenge facing organizations, practitioners, and regulators is not the existence of AI governance standards. ISO/IEC 42001 represents a meaningful and necessary step toward responsible AI governance at scale. The challenge is that the pipeline connecting a published standard to genuine organisational capability is structurally weak, and that weakness is being compounded by a training market that is not equipped to develop the depth of competence that AI governance genuinely requires.

AI is complicated. It demands expertise that spans technical, ethical, legal, and operational disciplines. This expertise takes time, sustained investment, and practical experience to develop. A multi-day training course, online or in-person, provides a foundation at best and false confidence at worst. The organizations, auditors, and consultants operating in this space need to be honest about this and to invest accordingly.

Closing the competence gap requires systematic work at every stage of the pipeline: structured interpretation that makes abstract requirements actionable, risk-based contextualization that calibrates controls to actual use case risk, maturity models that measure real capability rather than compliance status, integrated frameworks that connect AI governance to the broader control environment, and scenario-based guidance that supports consistent implementation in practice.
