AI and Digital Trust

Managing the risks of intelligent systems

Artificial intelligence is rapidly becoming central to how organizations operate, compete, and communicate. But AI also introduces a new category of digital trust risk — one that existing security and compliance frameworks were not designed to address alone. This whitepaper examines eight critical AI risk domains and the governance controls required to ensure AI becomes a trust enabler, not a trust liability.

Executive Summary

Digital trust, the confidence that stakeholders place in an organization's ability to protect data, make fair decisions, and behave ethically in digital environments, is increasingly shaped by how that organization deploys artificial intelligence. AI offers extraordinary potential to enhance speed, accuracy, and scale across every business function. But it also introduces risks that, if unmanaged, can erode that trust quickly and at scale.

Unlike traditional IT risks, AI risks are often invisible until they cause harm. Consider a model that produces biased lending decisions, a system that drifts silently from its original performance baseline, or an automated process that cannot explain its conclusions to a regulator. These are not hypothetical scenarios. They are documented failures occurring in regulated industries today.

This whitepaper identifies and analyzes eight principal AI risk domains relevant to digital trust: transparency, bias, privacy, security, accountability, automation bias, model drift, and ethical misuse. For each, it provides a structured risk statement, an impact assessment, and a catalog of recommended controls, aligned with major governance frameworks including ISO/IEC 27001:2022, ISO/IEC 42001, the EU AI Act, and NIST AI RMF.

The paper concludes with a mapping of AI risks to the six pillars of digital trust — security, privacy, reliability, transparency, accountability, and ethics — and a set of practical recommendations for organizations seeking to build AI governance that is proportionate, effective, and audit-ready.

AI can be a powerful enabler of digital trust, but only if organizations treat transparency, fairness, security, and governance as first-order design requirements, not afterthoughts.

1. The Digital Trust Imperative

Digital trust is not a single capability; it is a composite of properties that an organization must demonstrate consistently across its digital interactions. These include the security of systems and data, the fairness and accuracy of automated decisions, the transparency of processes, and the ethical conduct of the organization in the digital realm.

As AI systems become embedded in customer-facing services, internal workflows, and critical infrastructure, the conditions under which digital trust is granted or withdrawn are changing fundamentally. Stakeholders (customers, regulators, employees, and business partners) increasingly evaluate trust not just on whether an organization protects their data, but on whether its AI systems make decisions that are fair, explainable, and subject to meaningful human governance.

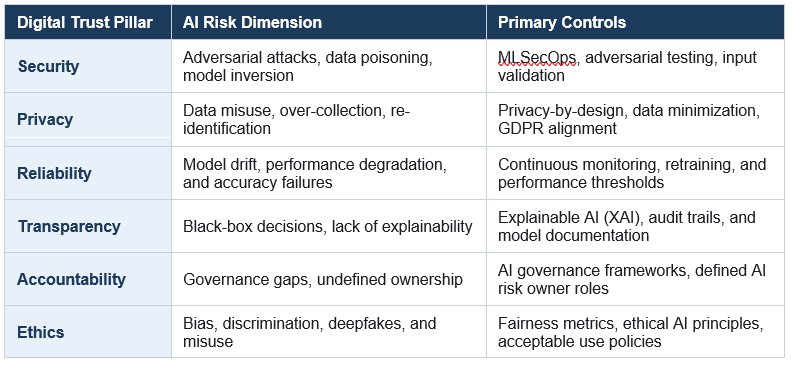

The six pillars of digital trust directly intersect with AI deployment:

- Security — Can the AI system be compromised, manipulated, or exploited?

- Privacy — Does the AI respect data minimization, purpose limitation, and individual rights?

- Reliability — Does the AI perform consistently and accurately over time?

- Transparency — Can the AI explain its decisions to users, oversight personnel, and regulators?

- Accountability — Is there clear ownership and governance for AI decisions and their consequences?

- Ethics — Are AI outputs fair, unbiased, and free from deliberate misuse?

Each of these pillars is threatened by specific AI failure modes. The sections that follow examine these failure modes in detail.

2. Eight Critical AI Risk Domains

The following risk profiles represent the principal categories of AI-related risk with direct implications for digital trust. Each is presented with a structured risk statement, impact analysis, and recommended controls drawn from leading governance frameworks.

01 Lack of Transparency — The Black Box Problem

Risk: AI-driven decisions lack explainability, reducing trust and creating regulatory exposure where organizations cannot demonstrate the basis for automated outcomes.

Impacts

- Regulatory breaches of the EU AI Act, GDPR Article 22 (automated individual decision-making), and sector-specific accountability requirements

- Customer and stakeholder distrust when decisions affecting individuals cannot be explained

- Legal challenges arising from automated decision disputes and right-to-explanation claims

Controls

- Explainable AI (XAI) techniques — LIME, SHAP, and model-specific interpretability tools

- Model documentation and decision audit trails maintained throughout the model lifecycle

- Alignment with ISO/IEC 27001:2022 A.5.1, A.8.2 and ISO 42001 transparency requirements

- Human-readable output summaries for decisions affecting individuals
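
One way to picture the last control is a per-decision breakdown for a simple linear scoring model. The sketch below is illustrative only: it is not LIME or SHAP, and the feature names, weights, and baseline values are hypothetical. It ranks each feature by how far it moved the score from a reference baseline and turns the top drivers into a plain-language summary.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Contribution:
    feature: str
    value: float  # signed effect on the score relative to the baseline


def explain_linear_score(weights, baseline, applicant):
    """Return per-feature contributions, largest absolute effect first."""
    contributions = [
        Contribution(name, weights[name] * (applicant[name] - baseline[name]))
        for name in weights
    ]
    return sorted(contributions, key=lambda c: abs(c.value), reverse=True)


def summarize(contributions, top_n=2):
    """Build a plain-language summary of the strongest drivers."""
    parts = []
    for c in contributions[:top_n]:
        if c.value == 0:
            continue  # a feature at its baseline value had no effect
        direction = "raised" if c.value > 0 else "lowered"
        parts.append(f"{c.feature} {direction} the score by {abs(c.value):.2f}")
    return "; ".join(parts)


# Hypothetical model weights, baseline (reference applicant), and one applicant.
WEIGHTS = {"income_k": 0.5, "missed_payments": -2.0, "years_at_address": 0.25}
BASELINE = {"income_k": 40.0, "missed_payments": 1.0, "years_at_address": 4.0}
APPLICANT = {"income_k": 44.0, "missed_payments": 3.0, "years_at_address": 4.0}

ranked = explain_linear_score(WEIGHTS, BASELINE, APPLICANT)
print(summarize(ranked))
```

For non-linear models this arithmetic no longer holds, which is where dedicated XAI tooling comes in; the summary format, however, can stay the same.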

02 Bias and Discrimination

Risk: AI systems trained on historically biased datasets may produce outputs that systematically disadvantage individuals or groups on the basis of protected characteristics.

Impacts

- Reputational damage and public loss of confidence in AI-driven services

- Legal liability under equality, anti-discrimination, and consumer protection legislation

- Regulatory sanctions where biased AI outputs constitute unlawful discrimination

- Erosion of stakeholder trust across customer, employee, and investor communities

Controls

- Bias testing and fairness metrics applied at development, validation, and post-deployment stages

- Diverse, representative training datasets with documented provenance and known limitations

- Ethical AI governance frameworks with explicit fairness objectives and measurement criteria

- Ongoing monitoring for demographic disparity in model outputs
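
As a minimal sketch of what monitoring for demographic disparity can involve, the example below computes per-group selection rates and two common fairness metrics: the demographic parity difference and the disparate impact ratio. The group labels and data are hypothetical, and the 0.8 review threshold is an assumption borrowed from the widely cited "four-fifths" rule of thumb, not a requirement stated in this paper.

```python
from collections import defaultdict


def selection_rates(records):
    """Map each group to its share of favorable outcomes.

    `records` is an iterable of (group, approved) pairs.
    """
    totals = defaultdict(int)
    favorable = defaultdict(int)
    for group, approved in records:
        totals[group] += 1
        favorable[group] += int(approved)
    return {group: favorable[group] / totals[group] for group in totals}


def demographic_parity_difference(rates):
    """Gap between the highest and lowest group selection rates."""
    return max(rates.values()) - min(rates.values())


def disparate_impact_ratio(rates):
    """Lowest group selection rate divided by the highest."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no favorable outcomes in any group, so no disparity
    return min(rates.values()) / highest


# Hypothetical decisions: group A approved 8 of 10, group B approved 5 of 10.
records = (
    [("A", True)] * 8 + [("A", False)] * 2
    + [("B", True)] * 5 + [("B", False)] * 5
)

rates = selection_rates(records)
REVIEW_THRESHOLD = 0.8  # assumed rule-of-thumb threshold for this sketch
needs_review = disparate_impact_ratio(rates) < REVIEW_THRESHOLD
print(rates, needs_review)
```

Neither metric is sufficient alone: equal selection rates can coexist with unequal error rates, so the choice of metric belongs in the documented fairness objectives.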

03 Data Privacy and Misuse

Risk: AI systems requiring large-scale personal data processing create elevated risks of privacy violations through insufficient consent mechanisms, data over-collection, or unauthorized secondary use.

Impacts

- Regulatory breaches under GDPR and equivalent privacy regimes, including enforcement action and fines

- Data leakage or re-identification of individuals from aggregated or anonymized datasets

- Loss of customer trust where AI-driven personalization is perceived as intrusive or opaque

Controls

- Privacy-by-design embedded in AI system architecture from the outset

- Data minimization — collecting and retaining only what is strictly necessary for the declared purpose

- Secure data lifecycle management covering collection, processing, storage, and deletion

- DPIAs for high-risk AI processing activities (ref. GDPR and ISO 27701)

- Integration with ISO 27001 Annex A.5 and A.8 controls

04 Security Vulnerabilities in AI Systems

Risk: AI models introduce novel attack surfaces not addressed by conventional cybersecurity controls, including data poisoning, model inversion, and adversarial manipulation.

Impacts

- Integrity loss — manipulated model outputs affecting decisions, processes, or recommendations

- Operational disruption through adversarial attacks causing model failure or erratic behavior

- Exfiltration of training data or intellectual property through model inversion techniques

- Compromised decision-making in critical systems — financial, healthcare, infrastructure

Controls

- Secure model lifecycle management (MLSecOps) from data ingestion through to deployment

- Adversarial testing and red-teaming exercises targeting AI-specific attack vectors

- Input validation and anomaly detection to identify and reject manipulated inputs

- Access controls on model artifacts, training data, and inference endpoints

- Alignment with NIST AI RMF Govern, Map, Measure, and Manage functions
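
The input validation control can be sketched as a statistical screen placed in front of an inference endpoint. The example below is a simplified illustration: the feature names, training rows, and z-score limit of 4.0 are all assumptions. It rejects inputs that are missing, non-numeric, non-finite, or far outside the distribution seen in training. A screen like this will not catch adversarial inputs crafted to stay within normal ranges, so it complements adversarial testing rather than replacing it.

```python
import math
from statistics import mean, stdev


def build_profile(training_rows):
    """Record the mean and standard deviation of each training feature."""
    profile = {}
    for name in training_rows[0]:
        values = [row[name] for row in training_rows]
        profile[name] = {"mean": mean(values), "std": stdev(values)}
    return profile


def check_input(row, profile, z_limit=4.0):
    """Return a list of reasons to reject the input; empty means accept."""
    problems = []
    for name, stats in profile.items():
        value = row.get(name)
        if isinstance(value, bool) or not isinstance(value, (int, float)):
            problems.append(f"{name}: missing or not numeric")
            continue
        if not math.isfinite(value):
            problems.append(f"{name}: not a finite number")
            continue
        if stats["std"] > 0:
            z = abs(value - stats["mean"]) / stats["std"]
            if z > z_limit:
                problems.append(f"{name}: z-score {z:.1f} is above {z_limit}")
    return problems


# Hypothetical training data for a two-feature model.
TRAINING = [
    {"age": 20, "amount": 100},
    {"age": 30, "amount": 200},
    {"age": 40, "amount": 300},
    {"age": 50, "amount": 400},
    {"age": 60, "amount": 500},
]
PROFILE = build_profile(TRAINING)

print(check_input({"age": 35, "amount": 250}, PROFILE))   # typical input
print(check_input({"age": 35, "amount": 5000}, PROFILE))  # extreme amount
```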

05 Accountability and Governance Gaps

Risk: Absence of clearly defined ownership, oversight structures, and decision trails for AI systems creates governance failures and prevents effective regulatory response.

Impacts

- Non-compliance with AI regulations requiring designated accountability for high-risk AI systems

- Inadequate incident response — no clear owner means no clear escalation path when AI fails

- Inability to demonstrate audit-readiness to regulators, auditors, or affected individuals

- Diffusion of responsibility enabling AI-related harms to go unaddressed

Controls

- Formal AI governance frameworks with board-level visibility and executive accountability

- Defined AI risk owner roles with documented responsibilities across the model lifecycle

- Model approval, change management, and periodic review processes

- AI registers documenting systems in use, purpose, risk classification, and oversight arrangements

- Alignment with EU AI Act conformity assessment requirements (Article 43)

06 Over-Reliance on AI — Automation Bias

Risk: Excessive deference to AI outputs without adequate human scrutiny degrades decision quality and removes the accountability that human oversight is intended to provide.

Impacts

- Poor decision quality in domains where AI outputs are accepted without validation

- Safety risks in critical sectors — healthcare, aviation, financial services, law enforcement

- Reduced human accountability as responsibility is perceived to rest with the AI system

- Regulatory exposure where meaningful human oversight is a mandated requirement

Controls

- Human-in-the-loop controls designed to require active, informed engagement, not passive sign-off

- Decision validation checkpoints calibrated to the risk level of the AI application

- Training and awareness programs to build AI literacy and critical evaluation skills

- Workflow design providing oversight staff with sufficient time, information, and authority to challenge AI outputs
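
A decision validation checkpoint calibrated to risk can be as simple as a routing rule. The sketch below assumes three hypothetical risk tiers and illustrative confidence thresholds; none of these values come from a standard. High-risk decisions always go to a human reviewer, while lower tiers are automated only when model confidence clears the tier's threshold.

```python
from enum import Enum


class RiskTier(Enum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


# Hypothetical policy: minimum model confidence required to skip human review.
# None means every decision in that tier is reviewed by a person.
AUTO_DECISION_CONFIDENCE = {
    RiskTier.LOW: 0.70,
    RiskTier.MEDIUM: 0.95,
    RiskTier.HIGH: None,
}


def route_decision(tier, confidence):
    """Return 'automated' or 'human_review' for a single model decision."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    threshold = AUTO_DECISION_CONFIDENCE[tier]
    if threshold is None or confidence < threshold:
        return "human_review"
    return "automated"


print(route_decision(RiskTier.LOW, 0.82))
print(route_decision(RiskTier.MEDIUM, 0.82))
print(route_decision(RiskTier.HIGH, 0.99))
```

Routing alone does not prevent automation bias. The reviewer still needs the time, information, and authority described above for the checkpoint to be more than a passive sign-off.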

07 Model Drift and Performance Degradation

Risk: AI model performance degrades over time as real-world data patterns diverge from training data, producing outputs that are inaccurate, unreliable, or no longer fit for purpose.

Impacts

- Business errors arising from outdated model outputs used as the basis for operational decisions

- Compliance breaches where degraded performance causes AI-driven processes to fall below required standards

- Erosion of organizational confidence in AI systems, reducing adoption and investment

- Undetected drift in high-stakes systems — credit scoring, fraud detection, clinical decision support

Controls

- Continuous monitoring of model performance metrics with automated alerting on threshold breaches

- Scheduled and trigger-based model retraining with version control and change documentation

- Defined performance thresholds below which systems are automatically flagged or taken offline

- Data pipeline monitoring to detect upstream changes that may affect model inputs
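
One common way to quantify the drift these controls watch for is the Population Stability Index (PSI), which compares the distribution of a feature or score at training time with its distribution in production. The sketch below is one possible implementation under stated assumptions: equal-width bins taken from the baseline range, a small floor to avoid division by zero in empty bins, and an alert threshold of 0.25, which is a widely used rule of thumb rather than a value from any of the frameworks cited here.

```python
import math


def bin_fractions(values, edges):
    """Share of values in each bin; out-of-range values fall in the end bins."""
    counts = [0] * (len(edges) - 1)
    for v in values:
        index = 0
        while index < len(counts) - 1 and v >= edges[index + 1]:
            index += 1
        counts[index] += 1
    return [c / len(values) for c in counts]


def population_stability_index(baseline, current, bins=10, floor=1e-4):
    """PSI = sum over bins of (actual - expected) * ln(actual / expected)."""
    low, high = min(baseline), max(baseline)
    if low == high:
        raise ValueError("baseline must contain more than one distinct value")
    width = (high - low) / bins
    edges = [low + i * width for i in range(bins + 1)]
    expected = bin_fractions(baseline, edges)
    actual = bin_fractions(current, edges)
    psi = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, floor), max(a, floor)  # guard against empty bins
        psi += (a - e) * math.log(a / e)
    return psi


ALERT_THRESHOLD = 0.25  # assumed rule-of-thumb level for significant drift

# Hypothetical score distributions: production scores shifted upward by 50.
baseline_scores = [float(v) for v in range(100)]
production_scores = [v + 50.0 for v in baseline_scores]

psi = population_stability_index(baseline_scores, production_scores)
print(f"PSI = {psi:.2f}, alert = {psi > ALERT_THRESHOLD}")
```

PSI measures input or score drift only. It says nothing about whether accuracy has degraded, so it is best paired with outcome-based performance metrics once ground truth becomes available.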

08 Ethical Concerns and Deliberate Misuse

Risk: AI capabilities — including generative AI, synthetic media, and behavioral profiling — may be weaponized for deception, surveillance, or manipulation, causing harm at scale.

Impacts

- Brand and reputational damage where the organization is associated with harmful AI applications

- Legal consequences under emerging legislation targeting deepfakes, synthetic fraud, and AI-enabled harassment

- Societal harm and erosion of public trust in digital information and AI-driven systems

- Regulatory action where misuse constitutes a violation of the EU AI Act's prohibited practices provisions

Controls

- Acceptable use policies governing what AI systems may and may not be used for, with enforcement mechanisms

- Ethical AI principles embedded in procurement, development, and deployment decision-making

- Monitoring for misuse scenarios — including detection of synthetic content generation or off-purpose use

- Whistleblower and escalation channels for reporting perceived AI misuse internally

- Alignment with EU AI Act prohibited practices provisions (Article 5)

3. Mapping AI Risks to Digital Trust Pillars

The following table maps each of the six digital trust pillars to its primary AI risk dimension and the associated control categories. This mapping is intended to support organizations in aligning their AI governance activities with trust-oriented outcomes, demonstrating alignment to regulators, auditors, and stakeholders.

4. Building an AI Trust Governance Framework

Addressing the eight risk domains identified in this paper requires more than point-in-time controls. It requires a coherent AI governance framework — one that is proportionate to the organization's AI risk profile, integrated with existing compliance and risk management structures, and capable of evolving as AI capabilities and regulatory expectations develop.

4.1 Establish an AI risk taxonomy and register

Organizations should maintain a structured register of all AI systems in use, classifying each by risk level (drawing on the EU AI Act's risk tiering where applicable), purpose, data processed, and oversight arrangements. The register provides the evidential foundation for all downstream governance activities.
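
A register of this kind can start as a small, typed data structure before it moves into a GRC tool. The sketch below is a minimal illustration: the field names, the one-year review interval, and the example entries are hypothetical, and the four risk labels are a simplified reading of the EU AI Act's tiering rather than its legal definitions.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum


class RiskLevel(Enum):
    # Simplified labels loosely based on the EU AI Act's risk tiering.
    MINIMAL = "minimal"
    LIMITED = "limited"
    HIGH = "high"
    UNACCEPTABLE = "unacceptable"


@dataclass
class AISystemEntry:
    name: str
    purpose: str
    risk_level: RiskLevel
    data_processed: list
    risk_owner: str
    oversight: str
    last_reviewed: date


class AIRegister:
    def __init__(self):
        self._entries = {}

    def add(self, entry):
        """Register a system; every entry needs a unique name and a named owner."""
        if not entry.risk_owner.strip():
            raise ValueError(f"{entry.name}: a named risk owner is required")
        if entry.name in self._entries:
            raise ValueError(f"{entry.name}: already registered")
        self._entries[entry.name] = entry

    def by_risk(self, level):
        return [e.name for e in self._entries.values() if e.risk_level is level]

    def overdue_for_review(self, today, max_age_days=365):
        return [
            e.name for e in self._entries.values()
            if (today - e.last_reviewed).days > max_age_days
        ]


# Hypothetical entries for illustration.
register = AIRegister()
register.add(AISystemEntry(
    "credit-scoring-v3", "Consumer loan decisions", RiskLevel.HIGH,
    ["financial history", "identity data"], "Head of Credit Risk",
    "Human review of all declines", date(2024, 1, 15),
))
register.add(AISystemEntry(
    "support-chatbot", "Customer service triage", RiskLevel.LIMITED,
    ["chat transcripts"], "Customer Operations Lead",
    "Monthly transcript sampling", date(2025, 3, 1),
))

print(register.by_risk(RiskLevel.HIGH))
print(register.overdue_for_review(today=date(2025, 6, 1)))
```

Rejecting entries without a named owner at the point of registration is a deliberate choice in this sketch: it makes the accountability gap visible before the system is deployed rather than after an incident.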

4.2 Define clear accountability structures

Each AI system should have a named risk owner — an individual with the authority and competence to make decisions about the system's deployment, modification, and retirement. Accountability should be documented, not assumed. Board-level oversight of the AI risk portfolio should be established where AI is material to the organization's operations or obligations.

4.3 Embed privacy and security by design

AI systems should be subject to security and privacy review from the point of design, not retrospectively. This means applying threat modeling, DPIAs, and adversarial testing as standard elements of the AI development and procurement lifecycle, not as post-deployment remediation activities.

4.4 Invest in explainability and documentation

For AI systems used in decisions that affect individuals — credit, insurance, employment, healthcare, law enforcement — explainability is both a regulatory requirement and a trust imperative. Organizations should invest in XAI tooling, maintain model cards documenting system capabilities and limitations, and ensure that competent human oversight personnel can interpret and challenge AI outputs effectively.

4.5 Build operational monitoring and response capabilities

AI governance is not a one-time activity. Model performance must be monitored continuously, with defined thresholds triggering review, retraining, or decommissioning. Incident response plans should explicitly address AI failure scenarios including bias events, security incidents involving AI systems, and model drift affecting regulated processes.

4.6 Align with regulatory frameworks proactively

The regulatory landscape for AI is maturing rapidly. The EU AI Act is in force, with its obligations phasing in over time; ISO/IEC 42001 provides a certifiable management system standard; and sector-specific AI guidance is emerging in financial services, healthcare, and critical infrastructure. Organizations that build AI governance aligned to these frameworks now will be better positioned for compliance, better protected from enforcement risk, and better able to demonstrate trustworthiness to their stakeholders.

5. Regulatory Landscape Overview

The following regulatory and standards frameworks are directly relevant to AI risk management and digital trust governance:

- EU AI Act (2024). The world's first comprehensive AI regulation. Establishes risk tiers for AI systems, mandates conformity assessments for high-risk applications, and prohibits certain AI practices outright. Requires designated accountability, transparency, and human oversight for systems affecting individuals.

- ISO/IEC 42001:2023. The international AI management system standard. Provides a structured framework for establishing, implementing, maintaining, and continually improving AI governance. Designed to complement ISO/IEC 27001 and other management system standards.

- ISO/IEC 27001:2022. The information security management standard. Its organizational controls (Annex A.5) and technological controls (Annex A.8) apply directly to AI systems handling personal or sensitive data. The 2022 revision includes new controls relevant to cloud, supplier, and data lifecycle management.

- ISO/IEC 27701:2025. A privacy information management standard providing a framework for managing and protecting personal data. Applies to AI systems handling PII, covering lawful processing, data subject rights, and responsibilities for controllers and processors across the data lifecycle.

- NIST AI RMF (2023). The US National Institute of Standards and Technology AI Risk Management Framework. Organizes AI risk activities across four functions: Govern, Map, Measure, and Manage. Widely adopted as a reference framework in regulated industries.

- GDPR and equivalent regimes. Privacy regulations impose obligations on AI systems processing personal data, including purpose limitation, data minimization, safeguards for solely automated decisions (Article 22, often described as a right to explanation), and mandatory DPIAs for high-risk processing.

Conclusion

AI is not inherently a threat to digital trust, but it is inherently a test of it. Every AI system an organization deploys is a statement about its values: whether it prioritizes transparency over opacity, fairness over efficiency shortcuts, genuine accountability over the appearance of oversight.

The eight risk domains examined in this paper are not theoretical. They are active failure modes documented across industries, each with the potential to cause regulatory exposure, stakeholder harm, and lasting reputational damage. The organizations that manage these risks effectively, not through compliance theater, but through genuine governance, will be the ones that earn and retain the trust their AI systems are capable of generating.

The path forward is clear: build AI governance that is proportionate, proactive, and aligned with the frameworks that regulators, auditors, and stakeholders increasingly expect. Treat explainability, fairness, and accountability not as constraints on AI adoption but as the conditions under which AI earns the right to be trusted.
